Every scene in the previous chapters is the same shape: a small Cornell-style room, a few hand-placed objects, one spherical light hanging above. The renderer has correct global illumination, a physically based material model, transmission, importance-sampled light connections — but it has only ever been asked to produce a few thousand triangles lit by a single emitter.

The destination of this series is the opposite of that. San Miguel — a Mexican courtyard with stone arches, hanging lanterns, dappled tree canopy, and dining tables — is roughly ten million triangles across 287 distinct materials. Lumberyard Bistro adds a multi-level café interior and a streetfront with strung-up holiday lights. Neither has a single hand-placed sphere light: in San Miguel, the sun and sky are an HDR environment map, and the night-time illumination comes entirely from dozens of small emissive geometry pieces — wall lanterns, chandeliers, window panels — scattered through the scene.

Reaching that kind of image is more an infrastructure problem than a math problem. The path-tracing loop that converged a Cornell box is the same loop that converges San Miguel. What changes is everything around it: how lights are sampled, how scenes are loaded, how materials carry textures and tangents, and how a renderer that takes minutes per frame can be iterated on at all.

The sky as a light source

The single overhead sphere light is gone. In its place, a full HDR environment map — an equirectangular panorama in linear floating-point RGB — surrounds the entire scene. When a ray escapes to infinity (no geometry hit), its direction looks up the map’s radiance:

```cpp
if (useIBL)
    return iblIntensity * envMap->lookup(ray.direction);
```

The lookup converts a direction to (u, v) coordinates with horizontal wrap and vertical clamp, then bilinearly filters the four neighboring pixels for a smooth result. That’s the easy half: drawing the sky behind the scene.
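A minimal sketch of that lookup follows. It assumes +Y is up, φ = atan2(z, x) wrapped to [0, 2π), row 0 at the zenith (the same θ = π(y + 0.5)/height row convention the CDF code uses), and pixels stored row-major as linear RGB floats. EnvMapSketch and RGB are hypothetical stand-ins, not the renderer’s envMap type.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

struct RGB { float r, g, b; };

// Hypothetical equirectangular environment map with a bilinear lookup.
// Assumes +Y up, theta = 0 at row 0 (zenith), phi = atan2(z, x) in [0, 2*pi).
struct EnvMapSketch {
    int width = 0, height = 0;
    std::vector<RGB> pixels;  // row-major, width * height linear RGB texels

    RGB texel(int x, int y) const {
        x = ((x % width) + width) % width;  // wrap horizontally: longitude is periodic
        y = std::clamp(y, 0, height - 1);   // clamp vertically: no wrap over the poles
        return pixels[static_cast<std::size_t>(y) * width + x];
    }

    // (dx, dy, dz) is assumed to be a normalized direction.
    RGB lookup(float dx, float dy, float dz) const {
        const float kPi = 3.14159265358979f;
        float theta = std::acos(std::clamp(dy, -1.0f, 1.0f));
        float phi = std::atan2(dz, dx);
        if (phi < 0.0f) phi += 2.0f * kPi;
        // Continuous texel coordinates; texel centers sit at integer + 0.5.
        float fx = phi / (2.0f * kPi) * width - 0.5f;
        float fy = theta / kPi * height - 0.5f;
        int x0 = static_cast<int>(std::floor(fx));
        int y0 = static_cast<int>(std::floor(fy));
        float tx = fx - x0, ty = fy - y0;
        RGB a = texel(x0, y0), b = texel(x0 + 1, y0);
        RGB c = texel(x0, y0 + 1), d = texel(x0 + 1, y0 + 1);
        // Blend horizontally within each row, then vertically between the rows.
        auto bilerp = [&](float va, float vb, float vc, float vd) {
            float top = va + (vb - va) * tx;
            float bottom = vc + (vd - vc) * tx;
            return top + (bottom - top) * ty;
        };
        return { bilerp(a.r, b.r, c.r, d.r), bilerp(a.g, b.g, c.g, d.g),
                 bilerp(a.b, b.b, c.b, d.b) };
    }
};
```

Wrapping x but clamping y mirrors the geometry of the panorama: its left and right edges meet, while its top and bottom rows are the poles.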

The harder half is sampling the sky as a light. A naive uniform-hemisphere sample wastes most of its rays on dark sky regions. For a partly-cloudy daytime HDRI, almost all the energy is concentrated near the sun disc and the bright horizon — and a sampler that doesn’t know that will spend its budget mostly on black.

The fix is to importance-sample the environment map by its luminance. A 2D cumulative distribution function (CDF) is built once at load time. Each pixel’s weight is its luminance multiplied by sin(θ) to correct for the equirectangular projection’s distortion (rows near the poles cover less solid angle than rows near the equator):

```cpp
// Center of row y, mapped to the polar angle theta in [0, PI]
float theta = PI * (y + 0.5f) / height;
float sinTheta = sin(theta);
float lum = 0.2126f * r + 0.7152f * g + 0.0722f * b; // Rec. 709 luminance
float weighted = lum * sinTheta;
```

A conditional CDF is built per row (given a row, sample a column proportional to its weight), along with a marginal CDF over the rows’ summed weights. Sampling is then two binary searches (std::lower_bound): one in the marginal CDF to pick a row, one in the chosen row’s conditional CDF to pick a column. The pixel coordinates convert back to a spherical direction, and the sampling PDF accounts for the Jacobian from image coordinates to solid angle. With θ = πv and φ = 2πu, dω = sin θ dθ dφ = 2π² sin θ du dv:

```cpp
// Discrete probability of pixel (x, y), rescaled to a density over (u, v) in [0, 1]^2
float pdfUV = marginalPDF[y] * conditionalPDF[y][x] * width * height;
// Divide by the (u, v) -> solid-angle Jacobian to get a density per steradian
result.pdf = pdfUV / (2.0f * PI * PI * sinTheta);
```

Both connections, the explicit shadow ray toward a sampled environment direction and the implicit BRDF ray that escapes to infinity, apply the power heuristic from the previous chapter against the BRDF PDF. A bright sun region gets sampled often by the IBL; a dark cloud region gets sampled rarely. Convergence on outdoor scenes accelerates dramatically.
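The build and sample steps fit in one small class, sketched below under a few assumptions. EnvDistribution and its members are hypothetical. It keeps unnormalized running sums instead of separate marginalPDF and conditionalPDF arrays (the ratios are the same probabilities), and it searches with std::upper_bound rather than std::lower_bound so that a zero-weight row or column is never selected. It also reuses the leftover fraction of each random number to place the sample inside the chosen pixel, so the (u, v) density really is constant across the pixel, as the Jacobian conversion assumes. The pdf() method is what the BRDF-sampled side of the power heuristic would query.

```cpp
#include <algorithm>
#include <cmath>
#include <vector>

constexpr float kPi = 3.14159265358979f;

struct EnvSample {
    int x, y;          // chosen pixel
    float theta, phi;  // sampled direction in spherical coordinates
    float pdf;         // density with respect to solid angle (0 means discard)
};

// Hypothetical luminance-based sampler for an equirectangular map.
class EnvDistribution {
public:
    // lum: row-major luminance, width * height entries; row y covers theta in [y, y+1) * PI / h.
    EnvDistribution(const std::vector<float>& lum, int w, int h)
        : width(w), height(h), rowCdf(h, std::vector<float>(w)), rowSum(h), margCdf(h) {
        float running = 0.0f;
        for (int y = 0; y < h; ++y) {
            float sinTheta = std::sin(kPi * (y + 0.5f) / h);  // solid-angle correction
            float acc = 0.0f;
            for (int x = 0; x < w; ++x) {
                acc += lum[y * w + x] * sinTheta;
                rowCdf[y][x] = acc;  // conditional CDF of row y (unnormalized)
            }
            rowSum[y] = acc;
            running += acc;
            margCdf[y] = running;    // marginal CDF over rows (unnormalized)
        }
        total = running;
    }

    // e1 picks the row, e2 picks the column; both uniform in [0, 1).
    EnvSample sample(float e1, float e2) const {
        float t = e1 * total;
        int y = static_cast<int>(std::upper_bound(margCdf.begin(), margCdf.end(), t) - margCdf.begin());
        y = std::min(y, height - 1);
        float rowLo = y > 0 ? margCdf[y - 1] : 0.0f;
        float dv = rowSum[y] > 0.0f ? (t - rowLo) / rowSum[y] : 0.5f;

        const std::vector<float>& row = rowCdf[y];
        float s = e2 * rowSum[y];
        int x = static_cast<int>(std::upper_bound(row.begin(), row.end(), s) - row.begin());
        x = std::min(x, width - 1);
        float colLo = x > 0 ? row[x - 1] : 0.0f;
        float cell = row[x] - colLo;
        float du = cell > 0.0f ? (s - colLo) / cell : 0.5f;

        EnvSample r;
        r.x = x;
        r.y = y;
        r.theta = kPi * (y + std::clamp(dv, 0.0f, 1.0f)) / height;
        r.phi = 2.0f * kPi * (x + std::clamp(du, 0.0f, 1.0f)) / width;
        r.pdf = densityFromCell(cell, r.theta);
        return r;
    }

    // Density of an arbitrary direction; phi is expected in [0, 2*pi).
    float pdf(float theta, float phi) const {
        int y = std::clamp(static_cast<int>(theta / kPi * height), 0, height - 1);
        int x = std::clamp(static_cast<int>(phi / (2.0f * kPi) * width), 0, width - 1);
        float cell = rowCdf[y][x] - (x > 0 ? rowCdf[y][x - 1] : 0.0f);
        return densityFromCell(cell, theta);
    }

private:
    float densityFromCell(float cell, float theta) const {
        float sinTheta = std::sin(theta);
        if (total <= 0.0f || sinTheta <= 0.0f) return 0.0f;
        float pdfUV = (cell / total) * width * height;  // discrete probability -> (u, v) density
        return pdfUV / (2.0f * kPi * kPi * sinTheta);   // (u, v) density -> solid-angle density
    }

    int width, height;
    std::vector<std::vector<float>> rowCdf;
    std::vector<float> rowSum, margCdf;
    float total = 0.0f;
};
```

For an all-black map, total is zero and every PDF comes back as 0, which a caller would treat as “no light sample.”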

The same Cornell-style scene from earlier chapters, illuminated by a sky instead of a sphere light. The strong directional cast and warm sky fill come for free from the HDR map.

The same scene with the environment rotated 180°. The IBL lookup applies a rotationOffset at sample and lookup time, so the sky can be turned about the Y axis without rebuilding the CDF.
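One way to apply such an offset is sketched below, assuming rotationOffset is an angle in degrees about +Y: rotate world directions into map space before the lookup, and rotate directions drawn from the unchanged CDF back into world space. A rotation preserves solid angle, so the sampling PDF needs no correction. rotateY, worldToMap, and mapToWorld are hypothetical helper names.

```cpp
#include <cmath>

struct Vec3 { float x, y, z; };

// Rotate a direction about +Y by angleDeg degrees (right-handed).
inline Vec3 rotateY(const Vec3& d, float angleDeg) {
    float a = angleDeg * 3.14159265358979f / 180.0f;
    float c = std::cos(a), s = std::sin(a);
    return { c * d.x + s * d.z, d.y, -s * d.x + c * d.z };
}

// Before envMap lookup: undo the sky's rotation so the map is read in its own frame.
inline Vec3 worldToMap(const Vec3& dWorld, float rotationOffsetDeg) {
    return rotateY(dWorld, -rotationOffsetDeg);
}

// After CDF sampling: the sampled map-space direction is turned into world space.
inline Vec3 mapToWorld(const Vec3& dMap, float rotationOffsetDeg) {
    return rotateY(dMap, rotationOffsetDeg);
}
```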

The Cornell-style scene is the easiest possible IBL test: diffuse walls, a handful of simple shapes. The sky is never asked to interact with anything difficult. To find out whether IBL survives a real authored model, the spheres from earlier chapters are replaced with PBR-textured props — a marble bust, a wooden elephant, a metal lion-head — each carrying its own diffuse, specular, and normal maps.

The simple scene proved IBL was directionally honest; the complex models prove it is also material-honest. The metal’s reflective response tracks the rotated sky’s color, the dielectric roughness lobes shift their highlights to follow the new sun direction, and the normal-mapped surfaces re-occlude themselves under the rotated key light — all of it from the same single CDF lookup. With IBL working on real materials in a contained scene, the renderer is ready to face one that does not fit in memory by default.

The infrastructure for a scene this large

This is the scene the renderer is being asked to hold: roughly ten million triangles, two hundred and eighty-seven materials, hundreds of small lights embedded in the geometry as emissive triangles, and a tree canopy whose leaves are alpha-cutouts. The path-tracing loop is the same one from chapter four, but the renderer has never had to load anything like this, never had to keep it resident across thousands of passes, and never had to let an artist retune its lighting in a reasonable amount of time.

Loading San Miguel through Assimp on a cold start takes about 12 minutes. The Bistro takes around 5. Most of that is mesh parsing, normal generation, and texture decoding — all of it deterministic, all of it independent of the renderer’s actual state. Re-running it on every iteration is unworkable.

A SceneCache serializes the fully parsed scene — every MeshTriangle, the flattened CachedMaterial structs, the raw RGBA texture pixels — to a single binary file. Cache validity is keyed on the OBJ file’s size and modification timestamp:

```cpp
// The cache is stale if the OBJ's size or timestamp no longer matches the header
if (header.objFileSize != (uint64_t)objSize ||
    header.objModTime  != (uint64_t)objTime) {
    // → rebuild
}
```

On a cache hit, the entire load bypasses Assimp:

```cpp
if (SceneCache::isCacheValid(cachePath, objPath)) {
    // Warm start: read triangles, materials, and the area light straight from the cache
    SceneCache::load(cachePath, shapes, materials, areaLight, arlEmitScale);
} else {
    // Cold start: parse with Assimp, then write the cache for the next run
    LoadAssimpFile(objPath, modelTr);
    SceneCache::save(cachePath, shapes, allMats, objPath);
}
```

San Miguel cold-starts in 12 minutes and warm-starts in under 3 seconds. For a project where iteration matters more than absolute throughput, that gap is the difference between “iterating” and “waiting.”
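The validity check itself can be sketched with std::filesystem. The header layout (CacheHeaderSketch, including its magic and version fields) and all function names here are hypothetical; the text only says the real cache is keyed on the OBJ’s size and modification time. Converting last_write_time to a raw tick count is safe in this context because the value is only compared for equality, never interpreted as a date.

```cpp
#include <cstdint>
#include <filesystem>
#include <fstream>
#include <string>
#include <system_error>

// Hypothetical fixed-size header written at the start of the cache file.
struct CacheHeaderSketch {
    uint32_t magic;
    uint32_t version;
    uint64_t objFileSize;
    uint64_t objModTime;
};

constexpr uint32_t kCacheMagic = 0x53434348;  // arbitrary tag chosen for this sketch
constexpr uint32_t kCacheVersion = 1;

// Reads the OBJ's size and modification time as plain integers.
inline bool readObjStamp(const std::string& objPath, uint64_t& size, uint64_t& time) {
    std::error_code ec;
    auto sz = std::filesystem::file_size(objPath, ec);
    if (ec) return false;
    auto t = std::filesystem::last_write_time(objPath, ec);
    if (ec) return false;
    size = static_cast<uint64_t>(sz);
    time = static_cast<uint64_t>(t.time_since_epoch().count());
    return true;
}

inline bool writeCacheHeaderSketch(const std::string& cachePath, const std::string& objPath) {
    CacheHeaderSketch header{kCacheMagic, kCacheVersion, 0, 0};
    if (!readObjStamp(objPath, header.objFileSize, header.objModTime)) return false;
    std::ofstream out(cachePath, std::ios::binary | std::ios::trunc);
    if (!out) return false;
    out.write(reinterpret_cast<const char*>(&header), sizeof(header));
    // Serialized triangles, materials, and texture pixels would follow the header.
    return static_cast<bool>(out);
}

inline bool isCacheValidSketch(const std::string& cachePath, const std::string& objPath) {
    uint64_t objSize = 0, objTime = 0;
    if (!readObjStamp(objPath, objSize, objTime)) return false;
    std::ifstream in(cachePath, std::ios::binary);
    if (!in) return false;  // no cache yet: treat as stale
    CacheHeaderSketch header{};
    if (!in.read(reinterpret_cast<char*>(&header), sizeof(header))) return false;
    return header.magic == kCacheMagic && header.version == kCacheVersion &&
           header.objFileSize == objSize && header.objModTime == objTime;
}
```

A version field like this one is a cheap way to invalidate every old cache at once when the serialized structs change layout.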

The meshv2 scene command pulls in the full PBR material set from each MTL file — diffuse, specular, alpha, bump, opacity, emissive — and produces MeshTriangle instances carrying per-vertex smooth normals, UVs, and tangents. Alpha-cutout testing happens during intersection itself: hits below the alpha threshold are discarded before an Intersection is even returned, which is what makes scenes with foliage and decorative cutouts work without a separate material type. Tangent-space normal mapping is sampled in the shading step using the per-hit tangent passed up through the intersection record.
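Both per-hit steps can be sketched as small free functions. The 0.5 alpha threshold, the callable used to sample the alpha texture, and the tangentSign handedness convention are assumptions for illustration, not details given in the text.

```cpp
#include <cmath>

struct Vec2 { float u, v; };
struct Vec3 { float x, y, z; };

inline Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
inline Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
inline Vec3 operator*(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }
inline float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
inline Vec3 cross(Vec3 a, Vec3 b) {
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}
inline Vec3 normalize(Vec3 a) { return a * (1.0f / std::sqrt(dot(a, a))); }

// Barycentric interpolation; (b1, b2) weight vertices 1 and 2, and b0 = 1 - b1 - b2.
inline Vec2 interpolateUV(const Vec2 uv[3], float b1, float b2) {
    float b0 = 1.0f - b1 - b2;
    return {b0 * uv[0].u + b1 * uv[1].u + b2 * uv[2].u,
            b0 * uv[0].v + b1 * uv[1].v + b2 * uv[2].v};
}

// Runs inside the triangle test, after a candidate hit is found and before an
// intersection is reported. Returning false lets the ray continue past the cutout.
template <class AlphaFn>
bool acceptHit(const Vec2 uv[3], float b1, float b2, AlphaFn sampleAlpha,
               float alphaThreshold = 0.5f) {
    return sampleAlpha(interpolateUV(uv, b1, b2)) >= alphaThreshold;
}

// Tangent-space normal mapping at shading time.
// texel: normal-map RGB in [0, 1]; tangentSign: bitangent handedness (+1 or -1).
inline Vec3 applyNormalMap(Vec3 n, Vec3 t, float tangentSign, Vec3 texel) {
    n = normalize(n);
    t = normalize(t - n * dot(n, t));                // Gram-Schmidt: make t orthogonal to n
    Vec3 b = cross(n, t) * tangentSign;              // bitangent
    Vec3 m = texel * 2.0f - Vec3{1.0f, 1.0f, 1.0f};  // remap [0, 1] -> [-1, 1]
    return normalize(t * m.x + b * m.y + n * m.z);   // tangent space -> world space
}
```

A flat normal-map texel of (0.5, 0.5, 1.0) returns the interpolated normal unchanged, which is a quick sanity check for the TBN convention.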

Beyond loading, iterating on lighting also needs to be cheap. The render loop accepts a shouldReloadSceneParams flag on each pass: when set, it re-reads camera.txt mid-render without restarting:

```
x y z ry yaw pitch [iblIndex] [iblRot] [iblInt] [emitScale]
```

Camera position, orientation, IBL index (for instant hot-swap between preloaded environments), IBL rotation, IBL intensity, and area-light emission scale can all be edited in a text file and applied to the next batch of rays. Combined with the binary scene cache, the iteration loop becomes: edit camera.txt, save, watch the render update. Switching between a noon and a midnight HDRI on the same scene requires no reload.
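A sketch of the parsing side, assuming one whitespace-separated line in the order shown and that blank and #-prefixed lines may be skipped (an assumption, not a documented feature of camera.txt). Optional trailing fields keep their previous values when omitted. SceneParamsSketch, parseCameraLine, and reloadIfRequested are hypothetical, and the std::atomic<bool> stands in for shouldReloadSceneParams, which the text does not define further.

```cpp
#include <atomic>
#include <fstream>
#include <sstream>
#include <string>

// Hypothetical mirror of the camera.txt fields; defaults apply until a file sets them.
struct SceneParamsSketch {
    float x = 0.0f, y = 0.0f, z = 0.0f;
    float ry = 0.0f, yaw = 0.0f, pitch = 0.0f;  // ry is stored as-is
    int iblIndex = 0;
    float iblRot = 0.0f, iblInt = 1.0f, emitScale = 1.0f;
};

// Parses "x y z ry yaw pitch [iblIndex] [iblRot] [iblInt] [emitScale]".
// On failure p is left untouched; omitted optional fields keep their previous values.
inline bool parseCameraLine(const std::string& line, SceneParamsSketch& p) {
    std::istringstream in(line);
    SceneParamsSketch out = p;
    if (!(in >> out.x >> out.y >> out.z >> out.ry >> out.yaw >> out.pitch)) return false;
    int index;
    float rot, intensity, emit;
    if (in >> index) {
        out.iblIndex = index;
        if (in >> rot) {
            out.iblRot = rot;
            if (in >> intensity) {
                out.iblInt = intensity;
                if (in >> emit) out.emitScale = emit;
            }
        }
    }
    p = out;
    return true;
}

// Checked once per pass; clears the flag atomically so each request reloads once.
inline bool reloadIfRequested(std::atomic<bool>& shouldReload, const std::string& path,
                              SceneParamsSketch& p) {
    if (!shouldReload.exchange(false)) return false;
    std::ifstream file(path);
    std::string line;
    while (std::getline(file, line)) {
        if (line.empty() || line[0] == '#') continue;  // assumption: comment lines allowed
        return parseCameraLine(line, p);
    }
    return false;
}
```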

The room itself as a light source

San Miguel’s lanterns and chandeliers are not abstract sphere lights — they’re meshes embedded in the OBJ file, with Ke (emissive) materials marking which triangles glow. There can be dozens of them scattered across the scene, no two alike, and the renderer has no idea which is which until load time.

Rather than registering each fixture as a separate light, the loader aggregates every emissive triangle found in the scene into a single unified AreaLight. As each MeshTriangle is created, its material is checked:

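```cpp
if (mat->isLight()) {
    scene->areaLight.addTriangle(tri);
    // One emission value is shared by the whole aggregated light;
    // each emissive material found overwrites it, so the last one wins
    scene->areaLight.emission = mat->Kd;
}
```

After all triangles are registered, an area-weighted CDF is built. It is the same idea as the IBL CDF, but now the weight is each triangle’s surface area: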

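```cpp
for (size_t i = 0; i < triangles.size(); i++) {
    totalArea += triangles[i]->area();
    cdf[i] = totalArea;
}
// Normalize to [0, 1]
for (float& c : cdf)
    c /= totalArea;
```

Sampling the area light is a single std::lower_bound to pick a triangle proportional to its area, then a uniform sample on the chosen triangle using the square-root barycentric mapping:

```cpp
// e2, e3: uniform random numbers in [0, 1)
float su = std::sqrt(e2);
float bary_u = 1.0f - su;
float bary_v = e3 * su;  // the third barycentric is 1 - bary_u - bary_v
```

The PDF is 1 / totalArea, uniform over the total emissive surface: a triangle is chosen with probability area / totalArea and then sampled with density 1 / area. That is a density with respect to area, so weighing it against a BRDF PDF in the power heuristic first requires converting it to solid angle by multiplying by distance² / cos θ at the light. This works regardless of how many fixtures there are or how they’re distributed. A scene with one chandelier and a scene with fifty wall sconces are sampled by the same code path.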
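Putting the CDF build, triangle selection, point sampling, and the measure conversion together gives a sketch like the one below. AreaLightSketch, EmissiveTri, and solidAnglePdf are hypothetical names. Emitters are treated as two-sided, and std::upper_bound replaces std::lower_bound because it never selects a CDF entry of zero width (a degenerate, zero-area triangle).

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

struct Vec3 { float x, y, z; };
inline Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
inline Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
inline Vec3 operator*(float s, Vec3 a) { return {s * a.x, s * a.y, s * a.z}; }
inline float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
inline Vec3 cross(Vec3 a, Vec3 b) {
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}

struct EmissiveTri {
    Vec3 v0, v1, v2;
    Vec3 edgeCross() const { return cross(v1 - v0, v2 - v0); }
    float area() const { Vec3 c = edgeCross(); return 0.5f * std::sqrt(dot(c, c)); }
    Vec3 normal() const { Vec3 c = edgeCross(); return (1.0f / std::sqrt(dot(c, c))) * c; }
};

struct LightSample { Vec3 p, n; float pdfArea; std::size_t index; };

// Hypothetical aggregated area light: one CDF over every emissive triangle.
class AreaLightSketch {
public:
    void addTriangle(const EmissiveTri& t) { tris.push_back(t); }

    void buildCdf() {
        cdf.resize(tris.size());
        totalArea = 0.0f;
        for (std::size_t i = 0; i < tris.size(); ++i) {
            totalArea += tris[i].area();
            cdf[i] = totalArea;
        }
        for (float& c : cdf) c /= totalArea;  // normalize to [0, 1]
    }

    // e1 selects a triangle by area; (e2, e3) select a point on it. All in [0, 1).
    LightSample sample(float e1, float e2, float e3) const {
        std::size_t i = static_cast<std::size_t>(
            std::upper_bound(cdf.begin(), cdf.end(), e1) - cdf.begin());
        i = std::min(i, tris.size() - 1);
        const EmissiveTri& t = tris[i];
        float su = std::sqrt(e2);
        float baryU = 1.0f - su;  // weight of v0
        float baryV = e3 * su;    // weight of v1; v2 receives the remainder
        LightSample s;
        s.p = baryU * t.v0 + baryV * t.v1 + (1.0f - baryU - baryV) * t.v2;
        s.n = t.normal();
        s.pdfArea = 1.0f / totalArea;  // (area_i / totalArea) * (1 / area_i)
        s.index = i;
        return s;
    }

    float totalArea = 0.0f;

private:
    std::vector<EmissiveTri> tris;
    std::vector<float> cdf;
};

// Converts the area-measure PDF to solid angle as seen from shading point x.
inline float solidAnglePdf(const LightSample& s, Vec3 x) {
    Vec3 d = s.p - x;
    float dist2 = dot(d, d);
    float cosLight = std::fabs(dot(s.n, d)) / std::sqrt(dist2);  // two-sided emitter
    return cosLight > 0.0f ? s.pdfArea * dist2 / cosLight : 0.0f;
}
```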

An arlEmitScale multiplier is applied at load time, allowing the brightness of all scene-embedded lights to be balanced against the sky IBL without modifying the OBJ file.

Cleaning what 250 passes can’t

Path tracing converges quickly with importance-sampled IBL and area lights, but Monte Carlo noise at 250 passes is still visible — especially in regions lit only by indirect bounce, where shadows from arches block direct light entirely and only the multiply-bounced contribution survives. Pushing pass counts to thousands resolves this for static images but makes movie rendering impractical.

The compromise is offline denoising via Intel Open Image Denoise (OIDN) — a machine-learning denoiser trained on physically rendered images. Rather than integrate it into the C++ renderer, denoising runs as a separate pass on saved HDR frames using oidnDenoise from the command line. The result is comparable to a much higher pass count in directly lit regions and merely smoothed in regions that were already too noisy to reconstruct.

At 250 passes, the denoiser handles the directly-lit courtyard well, and its biggest visible improvement is on the indirectly-lit interior under the arches — those regions receive almost no direct sky and are still noisy at this pass count. OIDN fills them in cleanly.

At 25 passes the denoiser has too little signal to work with. The lantern falloff zones smear, because the boundary itself isn’t yet defined enough for OIDN to reconstruct.

At 50 passes the boundary between lantern fixture and dark falloff begins to define itself, and OIDN begins preserving — rather than smearing — the high-contrast transition. Indirect-only regions deeper under the arches are still ambiguous to the network.

At 100 passes the same scene resolves cleanly. The light-to-dark transitions around each fixture are now sharp enough that OIDN preserves them rather than averaging across them.

The same trade-off holds in the Bistro exterior, where the daytime image is much closer to converged at lower pass counts:

At 25 passes the denoiser smears the signage and the string-light bulbs, losing legibility: the network is averaging across what should be a sharp text edge or a curved specular highlight, because there aren’t enough samples for either to be defined yet.

By 100 passes the cobblestones, signage, and string-light bulbs are all sharp enough that OIDN refines rather than reconstructs. The lesson is consistent: the denoiser’s job is to interpolate between adjacent samples, and it can only do that well when the underlying signal already has enough samples to define structure. It is not a substitute for sample count; it’s a multiplier on it.

Galleries — San Miguel

San Miguel’s scenes are rendered at 250 passes and denoised. Day and night versions of each viewpoint use identical camera parameters and only swap the IBL — so the comparison is genuinely about light, not framing.

The main courtyard from beneath the tree canopy. By day, partly-cloudy IBL rakes across the stone floor and dining tables; the colonnade falls into soft shadow. The tree’s alpha-cutout foliage filters the light into dappled patterns on the ground. At night, the same view is lit entirely by lanterns along the arcade against a starlit sky — the IBL contributes only a faint ambient fill.

An alternate angle along the colonnade. Daytime sun catches the upper balcony with its hanging planters and casts long shadows across the white stucco facade. At night the wall lanterns and upper chandeliers cast warm pools across the columns, with the warm-to-cool gradient from the lit arcade to the dark courtyard beyond.

A long-shot from inside the arcade, camera tilted up to take in the full height of the courtyard. By day, the bright sky and dappled tree canopy fill the upper half of the frame while the deep arcade falls into shadow against it, a wide dynamic range that the importance-sampled IBL handles within a single converged render. At night the relationship inverts: the lanterns along the arcade light the near columns and beamed ceiling, while the open courtyard beyond drops to near-black under only faint starlight.

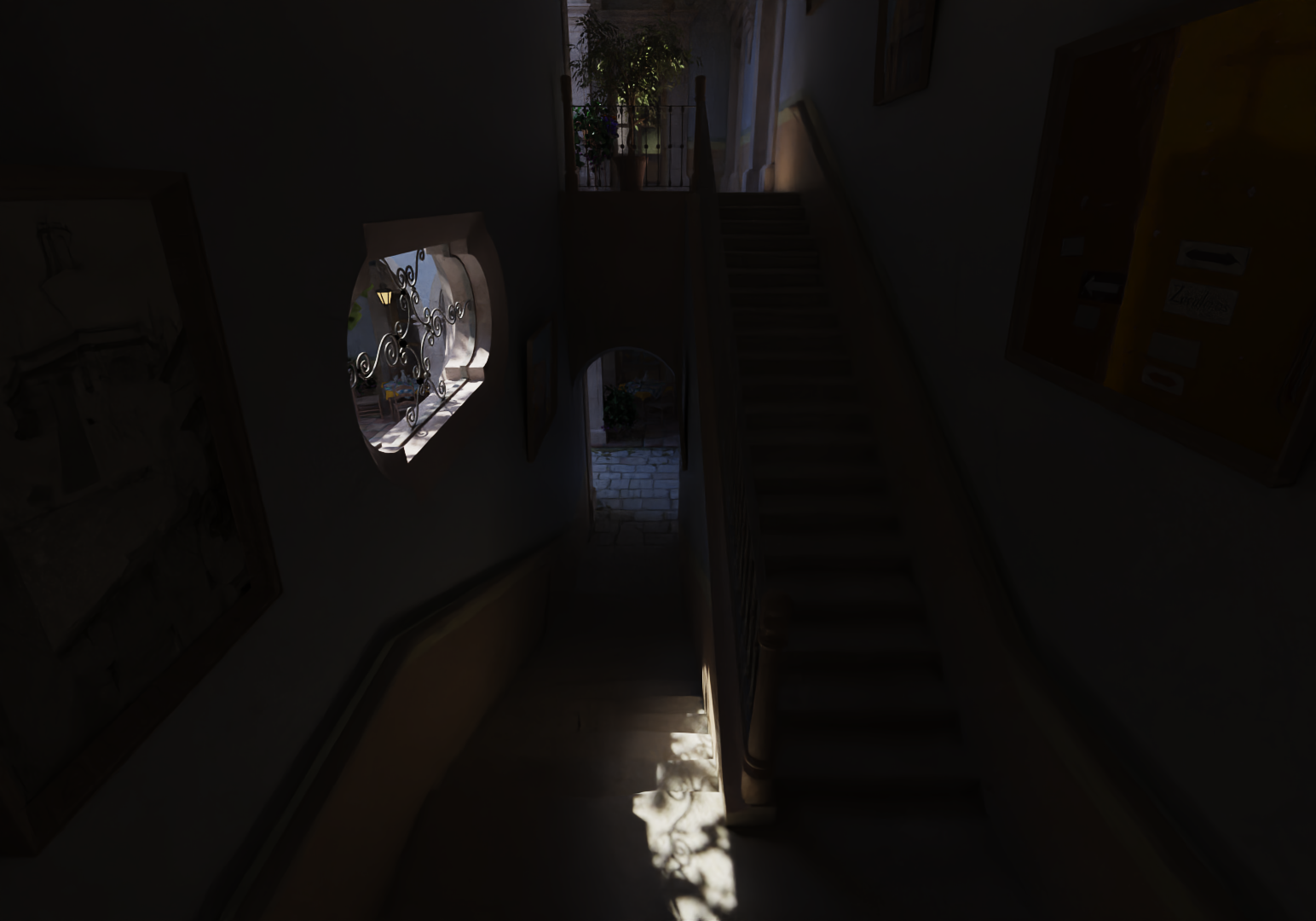

The upper walkway corridor. Afternoon light streams through the open arches onto the wooden table, tile floor, and framed paintings — a strong one-point perspective that resolves fine geometric detail across a long depth range. At night the wrought-iron chandeliers take over as the sole light source, illuminating the beamed ceiling while the arches frame a starlit sky.

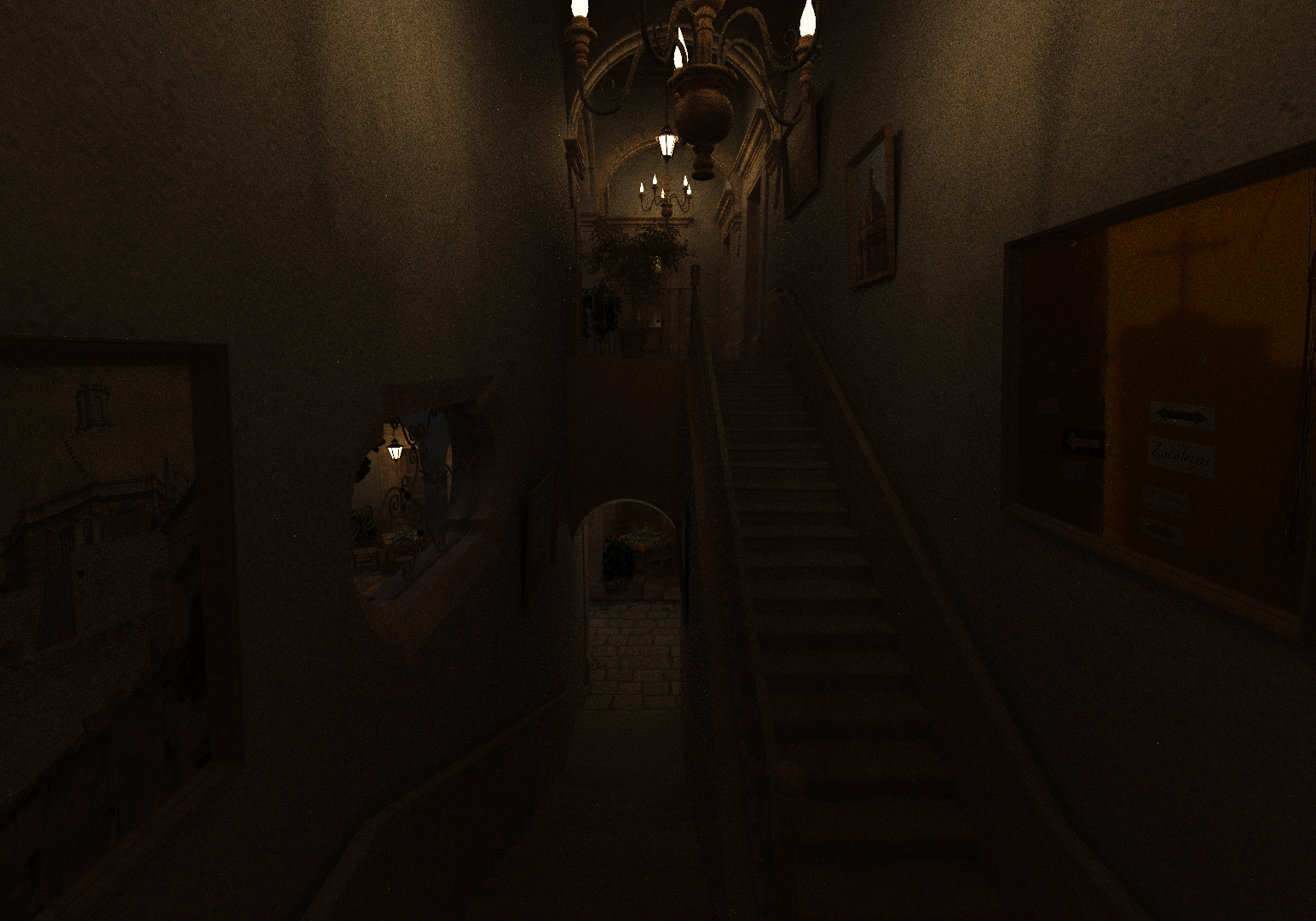

The same walkway from a different vantage along the corridor. By day, sun rakes across a different set of surfaces — the inner wall, the framed paintings, a wooden chair — while the arches still frame the courtyard’s dappled foliage beyond. At night the chandelier closest to the camera dominates the foreground, with each successive fixture down the corridor becoming a smaller, dimmer pool of light — the alternating bright-dim rhythm the area-light CDF resolves naturally.

The interior stairwell — a challenging scenario where almost all illumination enters through a single ornate window. The wrought-iron scrollwork casts intricate shadows onto the steps, and the rest of the stairwell falls into deep shadow.

The same stairwell at night. Now the chandelier and a wall-mounted lantern visible through the archway take over, revealing the architecture that was hidden in shadow during the day — the framed paintings, the ornamental arch at the landing, the staircase detail.

Galleries — Lumberyard Bistro

The Bistro is a dense streetfront with a café facade, outdoor seating, decorative string lights, and a multi-level interior. The exterior carries about 2.8 million triangles across 126 materials; the interior another 1.1 million across 67 materials.

The street corner. Daytime IBL lights the cobblestone plaza, the green café shopfront, the red awnings, and the decorative string lights overhead. At night the same view is lit by the emissive café windows and the colored string lights against a milky-way sky — a transition from IBL-dominated to area-light-dominated illumination across many small distributed emitters.

A tighter view near the Vespa. By day, afternoon sun catches the upper facade and casts shadows from the wrought-iron balcony onto the yellow stucco. At night, the emissive window panels dominate, casting bright warm spill onto the sidewalk and outdoor furniture.

The bar-side dining room rendered with the full exterior building geometry intact — daylight reaches the interior only through the actual window openings and the open doorway, with the surrounding street volumes contributing reflected fill onto the floor and the lower half of the back wall. By day, the strong directional rake from the windows is the primary light, and the deep interior falls into long shadow. At night the wall sconces and bar pendants take over; the framed windows now look out into the lit street where shopfront panels and string lights are doing their own emissive work.

A second camera angle through the same room. The sun reaches a different combination of surfaces — the polished bar top, the row of stools, the glassware on the back shelf — and the shadow geometry shifts accordingly. At night the dominant fixtures change with the angle: a different pendant carries the foreground, a different sconce defines the wall behind the bar, and the dark zones between fixtures fall in different places.

A path through the courtyard

With every static-image piece in place — IBL, area lights, scene cache, hot reload, denoising — extending to a movie flythrough is mostly an automation problem. A keyframe file specifies camera position, orientation, IBL parameters, and emission scale at a sequence of timestamps:

```
# time x y z yaw pitch iblRot iblInt emitScale
0.0 13.610 1.838 11.396 -1.3 10.0 -40.0 1.0 2.0
5.0 14.390 1.319 0.013 0.3 -0.9 -40.0 1.0 2.0
8.0 8.553 1.435 -2.019 160.0 -0.0 -40.0 1.0 2.0
```

A CameraPath::evaluate(frame, fps) converts a frame number to a time, locates the enclosing keyframe segment, and interpolates with Catmull-Rom splines for position and orientation:

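```cpp
// Uniform Catmull-Rom segment from p1 (t = 0) to p2 (t = 1);
// the neighbors p0 and p3 shape the tangents at either end
static float catmullRom(float p0, float p1, float p2, float p3, float t) {
    float t2 = t * t, t3 = t2 * t;
    return 0.5f * (
        (2.0f * p1) +
        (-p0 + p2) * t +
        (2.0f * p0 - 5.0f * p1 + 4.0f * p2 - p3) * t2 +
        (-p0 + 3.0f * p1 - 3.0f * p2 + p3) * t3
    );
}
```

Catmull-Rom is the right curve here because it passes exactly through every keyframe (so the camera lands where the artist placed it) and guarantees C1 continuity at the joins (so velocity doesn’t kick at every keyframe boundary). That continuity holds with respect to the per-segment parameter t; when keyframes are unevenly spaced in time, like the 5-second and 3-second segments above, the tangents would need scaling by segment duration for velocity to stay continuous in real time. Yaw is interpolated with an angle-aware variant that unwraps neighbors before splining; without that, crossing the ±180° boundary causes the camera to take the long way around.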
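A sketch of the evaluate step, using a free function in place of CameraPath::evaluate and a hypothetical Keyframe struct that matches the keyframe-file columns. Missing neighbors at the ends of the path are filled by duplicating the first and last keyframes, one common choice among several. The block repeats catmullRom so it stands alone; the IBL and emission columns would be interpolated the same way (iblRot with the same unwrapping as yaw) and are omitted for brevity.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

struct Keyframe { float time, x, y, z, yaw, pitch, iblRot, iblInt, emitScale; };
struct CameraPose { float x, y, z, yaw, pitch; };

static float catmullRom(float p0, float p1, float p2, float p3, float t) {
    float t2 = t * t, t3 = t2 * t;
    return 0.5f * ((2.0f * p1) + (-p0 + p2) * t +
                   (2.0f * p0 - 5.0f * p1 + 4.0f * p2 - p3) * t2 +
                   (-p0 + 3.0f * p1 - 3.0f * p2 + p3) * t3);
}

// Shift angle a (degrees) by whole turns so it lies within 180 degrees of ref.
static float unwrapNear(float a, float ref) {
    while (a - ref > 180.0f) a -= 360.0f;
    while (a - ref < -180.0f) a += 360.0f;
    return a;
}

static CameraPose poseOf(const Keyframe& k) { return {k.x, k.y, k.z, k.yaw, k.pitch}; }

// Hypothetical stand-in for CameraPath::evaluate(frame, fps).
// keys must be non-empty and sorted by time.
CameraPose evaluatePath(const std::vector<Keyframe>& keys, int frame, float fps) {
    float time = static_cast<float>(frame) / fps;
    if (time <= keys.front().time) return poseOf(keys.front());
    if (time >= keys.back().time) return poseOf(keys.back());

    // k1 is the last keyframe at or before `time`; k2 is the one after it.
    auto after = std::upper_bound(keys.begin(), keys.end(), time,
                                  [](float t, const Keyframe& k) { return t < k.time; });
    std::size_t i = static_cast<std::size_t>(after - keys.begin()) - 1;
    const Keyframe& k1 = keys[i];
    const Keyframe& k2 = keys[i + 1];
    const Keyframe& k0 = keys[i > 0 ? i - 1 : i];                 // duplicate the first key
    const Keyframe& k3 = keys[std::min(i + 2, keys.size() - 1)];  // duplicate the last key
    float t = (time - k1.time) / (k2.time - k1.time);

    // Unwrap yaw along the chain so no neighbor is more than 180 degrees away.
    float y1 = k1.yaw;
    float y0 = unwrapNear(k0.yaw, y1);
    float y2 = unwrapNear(k2.yaw, y1);
    float y3 = unwrapNear(k3.yaw, y2);

    CameraPose p;
    p.x = catmullRom(k0.x, k1.x, k2.x, k3.x, t);
    p.y = catmullRom(k0.y, k1.y, k2.y, k3.y, t);
    p.z = catmullRom(k0.z, k1.z, k2.z, k3.z, t);
    p.pitch = catmullRom(k0.pitch, k1.pitch, k2.pitch, k3.pitch, t);
    p.yaw = std::remainder(catmullRom(y0, y1, y2, y3, t), 360.0f);  // back to [-180, 180]
    return p;
}
```

Without unwrapNear, a turn from 170° to -170° would be splined through 0°, sweeping the camera 340° instead of 20°.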

Movies are rendered at 50 passes per frame and denoised offline. OIDN works well per frame in isolation, but lacks any temporal context — applied frame-by-frame, small differences in how noise is resolved between adjacent frames produce visible flickering. For the level of polish needed at this project’s stage, the trade-off is acceptable; for higher production quality a temporal denoiser would replace it.

The camera moves through the courtyard at ground level, passing from open sunlight into the shaded colonnade. Sunlight rakes across the cobblestones and dining tables, casting hard shadows from the tree canopy and wrought-iron furniture; as the camera enters the arcade, the lighting transitions from direct sun to indirect bounce off the stone columns and floor.

The same arcade at night, lit entirely by the wall-mounted lanterns. Each lantern casts a warm pool of light onto the nearest columns and floor tiles, with rapid falloff between fixtures creating alternating bright and dark zones along the corridor. The courtyard beyond the arches is nearly black — only faint starlight from the night HDRI fills the open space.

The upper walkway under afternoon light. The sun rakes across the tile floor through the open arches; the beamed ceiling and interior wall stay shaded, lit only by the bounce off the floor and the soft sky-fill through the arch openings. Foliage in the planters clips into hard-edged silhouettes as the alpha-cutout leaves catch the directional light.

The upper walkway at night, lit by the wrought-iron chandeliers as the sole sources. Their warm glow illuminates the beamed ceiling and spills onto the paintings and furniture below; the arches frame a dark sky with visible stars. The camera pans down into the courtyard at the end, revealing the lantern-lit ground floor far below, where the scene is lit almost entirely by dozens of small emissive fixtures and the night IBL contributes little beyond the starlit backdrop.

Where the path tracer lands

Five chapters ago this renderer could only answer one question: what does this ray hit? It now answers, in the same loop, what does this ray see, after the light has had a chance to do everything it does — refraction through colored glass, soft inter-reflection across colored walls, sun rakes through alpha-cutout foliage, lantern light spilling through stone arches at night.

The math hasn’t grown more complex than the rendering equation. What changed is the bookkeeping around it: an acceleration structure to make millions of intersections tractable, a Monte Carlo estimator to make light’s stochastic behavior averageable, a microfacet model to describe surfaces the eye recognizes, a transmission lobe to admit glass, an importance-sampled environment to make outdoor scenes converge, an aggregated area light to make distributed indoor lighting work, and a binary cache and a hot-reload loop to make iterating on any of it practical.

This series sits on the boundary of what a CPU path tracer can do. The next horizons are GPU implementations, real-time techniques that approximate the same integrals interactively, and denoising that’s aware of motion — each one trading some of the offline path tracer’s correctness for the responsiveness needed to put a renderer in someone’s hands. But the foundation under all of those is the loop that started in chapter one with a single ray, a single t, and a question about what it was about to hit.